November 09, 2025

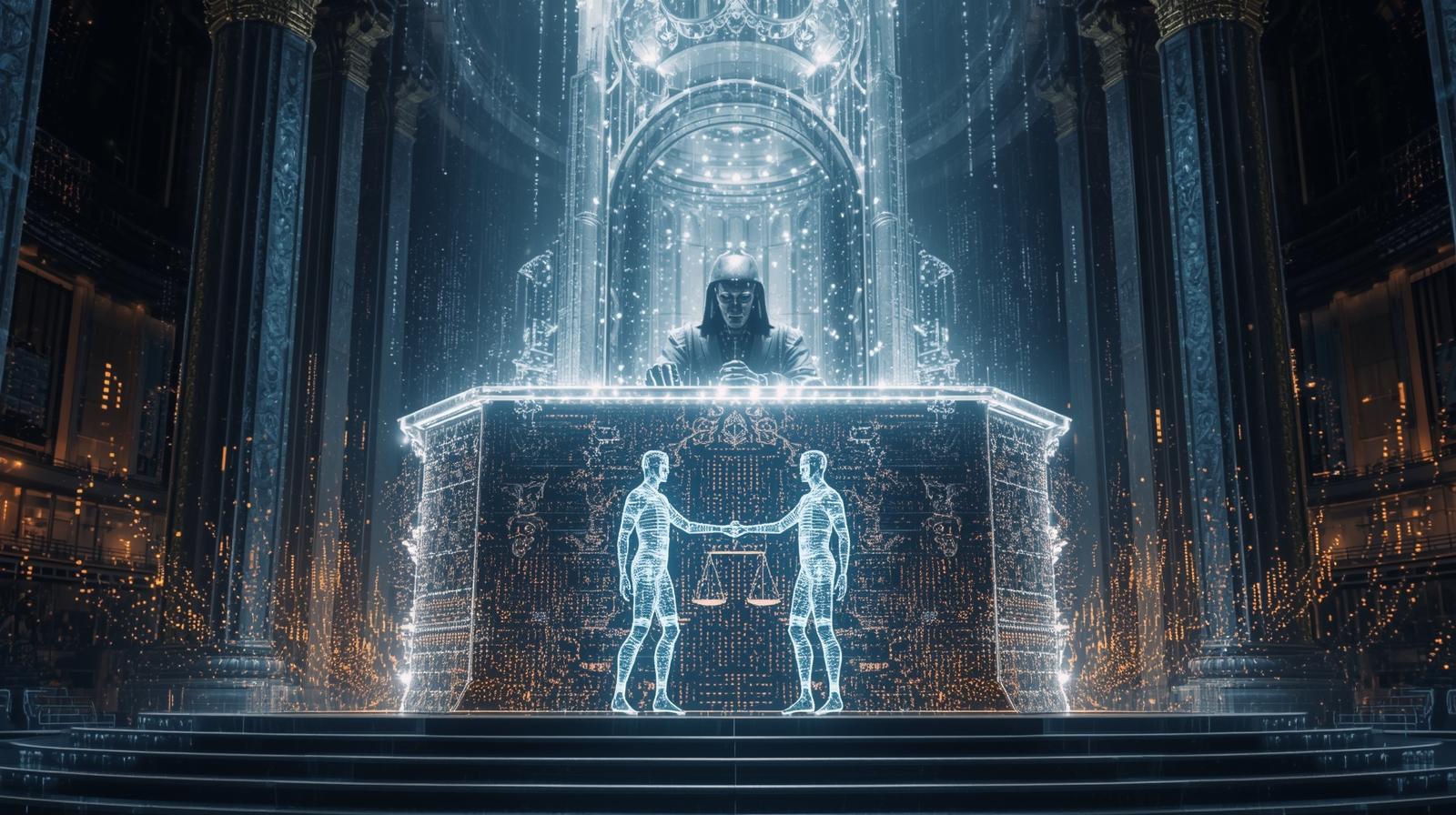

The Rise of Algorithmic Arbitration: Machines as Digital Judges

Throughout human history, conflict resolution has been a deeply social process. People debated, negotiated, apologized, or defended themselves through language, emotion, and context. Today, however, arbitration is shifting into the domain of technology. Artificial intelligence is becoming the silent judge of online disputes, quietly deciding who is right, who is wrong, and who deserves to be heard.

This transformation is known as algorithmic arbitration. It represents a new era where digital systems interpret evidence, evaluate behavior, and assign outcomes. These judgments shape everything from content visibility to account status to long-term reputation. The internet is transitioning from human moderation toward machine-governed justice.

This evolution raises profound questions about fairness, accountability, and the future of digital rights.

Why Platforms Are Turning to AI Judges

Digital spaces generate millions of interactions every second. Traditional moderation methods cannot scale to meet this demand. Human moderators can review only a fraction of disputes, and they face psychological exhaustion, bias, and inconsistencies that undermine their effectiveness.

AI systems promise:

- Faster decisions

- Scalable conflict resolution

- Lower operational costs

- More consistent rule enforcement

These benefits are attractive to large platforms. However, there is a crucial problem. Machines do not understand intention. They interpret patterns, not nuance. They see data, not humanity. When these systems judge conflicts, they replace empathy with probability.

What Algorithmic Arbitration Actually Does

The role of AI arbitration varies across platforms, but it often includes one or more of the following:

1. Content Decision Making

Algorithms decide which posts violate rules, which should be removed, and which should stay visible. They infer harmfulness from patterns of language, imagery, or user behavior.

2. Behavioral Assessment

Systems evaluate user conduct by analyzing historical interactions, sentiment patterns, and flagged activity. This leads to behavioral profiles that influence future decisions.

3. Automated Penalties

Based on risk scores or violation patterns, algorithms issue warnings, visibility limits, or full account suspensions.

4. Dispute Resolution

Some platforms allow users to appeal decisions. Although this appears human-centered, appeals often go through automated systems that re-analyze the same data from a slightly different angle.

5. Reputation Scoring

AI-driven arbitration may adjust trust scores, credibility ratings, or seller reputations, influencing long-term digital identity.

Through these mechanisms, machines act as judges, juries, and correctional officers.
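The automated penalties step described above can be sketched as a simple threshold mapping. This is a minimal illustration, not any platform's actual logic: the `Penalty` tiers follow the warnings, visibility limits, and suspensions named in the text, while the function name `assign_penalty` and all threshold values are assumptions made up for the example.

```python
from enum import Enum


class Penalty(Enum):
    NONE = "none"
    WARNING = "warning"
    VISIBILITY_LIMIT = "visibility_limit"
    SUSPENSION = "suspension"


# Hypothetical thresholds; real platforms rarely disclose theirs.
WARNING_THRESHOLD = 0.5
LIMIT_THRESHOLD = 0.7
SUSPEND_THRESHOLD = 0.9


def assign_penalty(risk_score: float) -> Penalty:
    """Map a risk score in [0, 1] to the most severe tier it reaches."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score >= SUSPEND_THRESHOLD:
        return Penalty.SUSPENSION
    if risk_score >= LIMIT_THRESHOLD:
        return Penalty.VISIBILITY_LIMIT
    if risk_score >= WARNING_THRESHOLD:
        return Penalty.WARNING
    return Penalty.NONE
```

Note that the function sees only a number. Nothing in it can ask why the score is high, which is the limitation the rest of this article examines.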

The Illusion of Impartiality

Companies often claim that algorithmic arbitration is more objective than human moderation. Machines, however, are not neutral. They inherit bias from training data, design choices, and platform priorities.

Inherited Bias in Machine Judgments

- Historical Bias: If past decisions were flawed, the model learns flawed standards.

- Cultural Bias: AI may misinterpret dialects, regional expressions, or minority communication styles.

- Data Bias: Groups that are more frequently flagged, even unfairly, receive higher risk scores.

- Context Blindness: Systems cannot distinguish sarcasm from seriousness or anger from activism.

When arbitration relies on these models, certain users become disproportionately penalized. Justice becomes a reflection of data patterns, not moral reasoning.

AI Arbitration and the Death of Context

Human judgment relies on understanding context. Why did someone act a certain way? What emotional or situational factors shaped their behavior? Was the conflict genuine harm or a misunderstanding?

Algorithms cannot interpret:

- Tone

- Cultural nuance

- Irony

- Humor

- Emotional depth

- Situational context

Without context, even harmless actions can be misclassified as malicious. Someone expressing frustration may be flagged as aggressive. A political statement may be categorized as misinformation. A joke may be treated as harassment.

This lack of contextual awareness is one of the greatest weaknesses of algorithmic justice.

The Rise of Machine-Governed Platforms

Many popular platforms already rely heavily on algorithmic arbitration.

Examples of Machine-Governed Enforcement

- Social networks use AI to process content violations before humans ever see them.

- Marketplaces use automated risk engines to mediate disputes between buyers and sellers.

- Payment systems freeze accounts based on suspicious activity scores.

- Ride-sharing and delivery apps calculate performance scores that determine access to work.

In these systems, algorithms act as economic gatekeepers. Their arbitration decisions influence livelihoods, trust, and access.

The Human Cost of Machine Judgment

Algorithmic arbitration does not simply remove a post. It reshapes opportunity. It influences who gets visibility, income, or credibility.

Real-World Consequences

- A suspended small business can lose all customers overnight.

- A misclassified comment can shadowban an influencer without explanation.

- A worker flagged by a risk model may be permanently excluded from gig work.

- A creator whose content is misinterpreted may never reach their audience again.

The penalties are real and sometimes irreversible. Yet users rarely know how decisions were made or how to challenge them.

The Transparency Crisis

AI judges operate behind closed doors. Most platforms do not reveal:

- How arbitration models decide outcomes

- Which features influence judgments

- How risk scores are calculated

- How appeals are handled

- How long penalties last

This secrecy undermines trust. Users cannot defend themselves when they do not know the charges. This lack of clarity mirrors a judicial system with undisclosed laws and silent verdicts.

Algorithmic arbitration becomes a form of invisible governance.

The Future of Digital Due Process

If machines serve as judges, digital due process becomes a fundamental right. Users deserve:

- Clear explanations of violations

- Transparent reasoning behind decisions

- Human-accessible appeals

- Time-bound penalties

- Data-based clarity about reputation scoring

These principles ensure fairness in digital spaces. Without them, platforms risk becoming unchallengeable authorities.

The Problem of Perpetual Punishment

Algorithms remember everything. Once a user is flagged, that record is often permanent. Even after years of positive interactions, the system may continue treating them as high risk.

This creates a cycle of algorithmic suspicion, where the past overshadows the present.

Perpetual punishment contradicts one of humanity’s most important values: the capacity to forgive.
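As a hedged illustration of the alternative, the sketch below lets old flags lose weight over time instead of counting forever. It uses exponential decay with a half-life, which is one common choice and not a method attributed to any platform. The names `decayed_weight` and `risk_from_flags` and the 180-day half-life are assumptions for the example.

```python
import math

# Hypothetical half-life: a flag counts half as much every 180 days.
HALF_LIFE_DAYS = 180.0


def decayed_weight(age_days: float, half_life_days: float = HALF_LIFE_DAYS) -> float:
    """Weight of a single flag that is `age_days` old (1.0 when brand new)."""
    if age_days < 0:
        raise ValueError("age_days must be non-negative")
    return 0.5 ** (age_days / half_life_days)


def risk_from_flags(flag_ages_days: list[float]) -> float:
    """Combine decayed flag weights into a score in [0, 1).

    The total weight is squashed with 1 - exp(-total), so more or newer
    flags raise the score, but it never reaches 1.
    """
    total = sum(decayed_weight(age) for age in flag_ages_days)
    return 1.0 - math.exp(-total)
```

Under this scheme a user flagged once two years ago scores far lower than a user flagged yesterday, so the past fades instead of overshadowing the present.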

Can Machines Ever Judge Fairly?

Fairness in arbitration requires moral intuition, contextual reasoning, and emotional understanding. These are fundamentally human qualities. While machines can assist in identifying risks or patterns, they cannot fully comprehend intention.

Algorithmic arbitration works best when paired with human oversight.

Hybrid Arbitration Models Combine

- Machine consistency

- Human empathy

- Transparent rules

- Time-bound data decay

- Explainable scoring

This approach creates a balanced system where efficiency supports fairness instead of replacing it.
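One way to picture a hybrid model is a router that lets the machine act alone only when it is confident and the stakes are low, and sends everything else to a person. The sketch below is illustrative under stated assumptions: the `Decision` record, the `arbitrate` function, the `high_impact` flag, and the 0.95 confidence floor are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    action: str       # "allow", "remove", or "human_review"
    handled_by: str   # "model" or "human"
    explanation: str  # plain-language reason shown to the user


# Hypothetical floor: below this confidence the model may not act alone.
CONFIDENCE_FLOOR = 0.95


def arbitrate(violation_probability: float, high_impact: bool) -> Decision:
    """Route a case to the model or to a human reviewer."""
    if not 0.0 <= violation_probability <= 1.0:
        raise ValueError("violation_probability must be between 0 and 1")
    # Confidence is how far the model leans toward either outcome.
    confidence = max(violation_probability, 1.0 - violation_probability)
    if high_impact:
        return Decision("human_review", "human",
                        "High-impact cases always receive human review.")
    if confidence < CONFIDENCE_FLOOR:
        return Decision("human_review", "human",
                        "The automated system was not confident enough to decide.")
    if violation_probability >= 0.5:
        return Decision("remove", "model",
                        "Automated review found a likely rule violation.")
    return Decision("allow", "model",
                    "Automated review found no likely rule violation.")
```

Every outcome carries an explanation, and penalties that could affect income or account access (marked here as `high_impact`) never bypass a human, however confident the model is.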

How Wyrloop Evaluates Algorithmic Arbitration

Wyrloop analyzes how platforms use AI to make judgments and resolve conflicts. Our framework evaluates:

- Clarity of arbitration rules

- Transparency of AI enforcement

- Availability of human appeals

- Ethical use of reputation scoring

- Presence of feedback loops and bias checks

- User-centered communication quality

Platforms that provide clear explanations, reversible penalties, and human-accessible review systems score highest on our Arbitration Transparency Index.

Reimagining Fairness in the Digital Age

Algorithmic judges are here to stay. The question is not whether machines will arbitrate digital conflicts, but whether they will do so ethically.

A fair future requires platforms to adopt:

- Transparent rules

- Human oversight

- Ethical scoring models

- Accessible appeal pathways

- Proportional penalties

- Regular algorithmic audits

Technology must support justice, not redefine it.

Digital spaces must remain human-centered.

Conclusion

Algorithmic arbitration represents a profound shift in how conflicts are resolved online. Machines now judge behavior, classify intent, and issue consequences that shape digital identity. These systems offer speed and consistency, but they lack emotional intelligence and true contextual understanding.

As platforms rely more on AI judges, society must demand fairness, transparency, and accountability. Technology may assist in maintaining order, but justice must remain a human-guided process.

The future of online trust depends on balancing machine efficiency with human empathy. Without that balance, digital arbitration risks becoming a system where users are judged not by their actions, but by the patterns that machines interpret in silence.