November 17, 2025

Moral Memory Implants: AI Storing Ethics for Future Selves

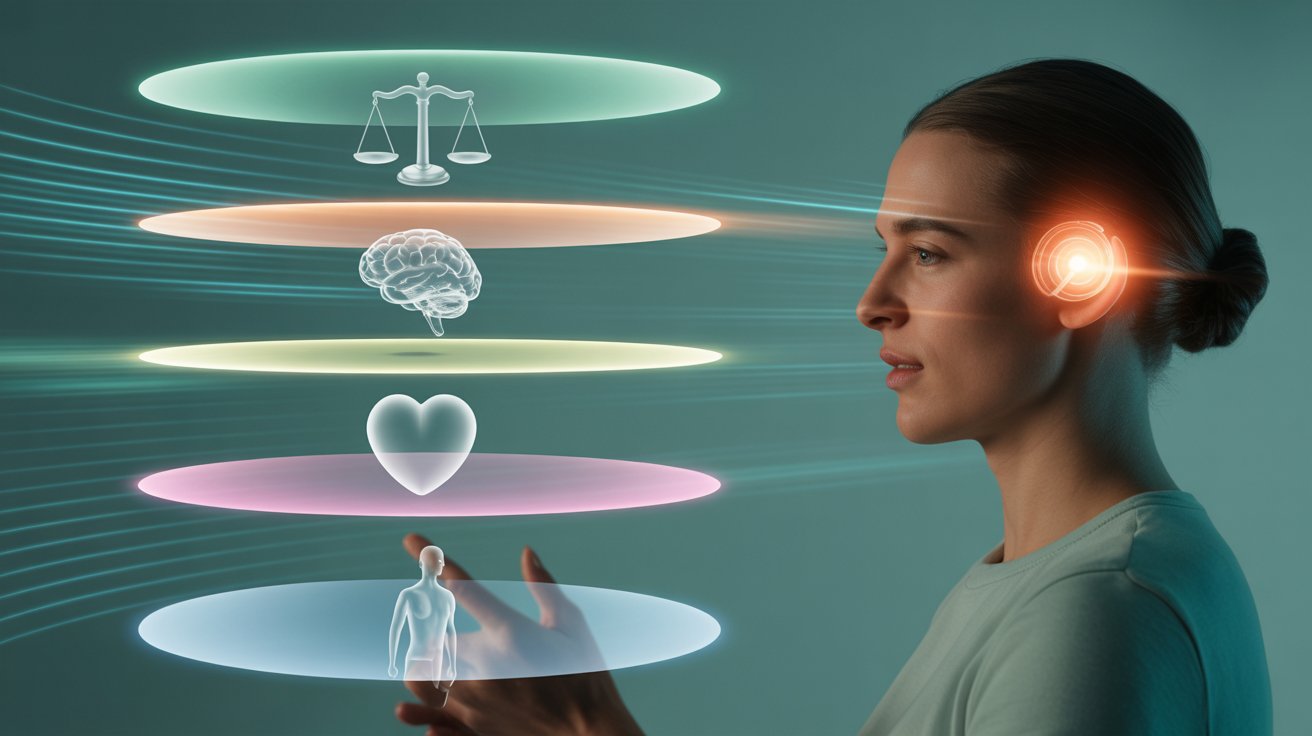

Human identity evolves through memory. The choices we make today are shaped by the lessons we carry from yesterday. Yet as technology becomes more integrated with cognition, a new possibility emerges. What if ethics could be preserved not only through lived experience, but through moral memory implants that store values, principles, and moral reasoning for our future selves?

This idea suggests a world where artificial intelligence safeguards a person’s ethical foundation, ensuring continuity even when personal circumstances change. Moral memory implants represent a fusion of cognitive augmentation and digital ethics. They are not mere reminders. They are structured repositories of moral insight capable of guiding decisions across time.

Such implants raise profound questions. Can ethics be stored like data? Can AI maintain integrity better than human memory? What happens when a machine knows your values better than you do? And who decides what becomes part of your moral archive?

What Are Moral Memory Implants?

Moral memory implants are conceptual systems that store ethically significant information. They act as internal archives where AI helps preserve moral beliefs, personal codes, lessons learned, and value-driven decisions.

Core functions of moral memory implants

- Long-term preservation of personal ethical principles

- AI-supported evaluation of moral consistency over time

- Guidance for future decision making based on earlier values

- Personalized moral reminders triggered by context

- Protection against manipulation through stable moral anchors

These implants help individuals remain aligned with their chosen principles even as life circumstances evolve.

Why Humans Need Ethical Memory Support

Human memory is fragile. Emotions, stress, trauma, and time can distort values. People often forget why they made past decisions or fail to recognize when they have drifted ethically.

Limitations of natural moral memory

- Bias alters how we remember past choices

- Stress can override ethical clarity

- Changing environments influence values

- Social pressure reshapes behavior

- Memory decay erodes important lessons

- Habits form without conscious intention

A moral memory implant counters these weaknesses by keeping a stable record of what a person valued, and why, that does not fade or bend with mood and circumstance.

How AI Stores and Interprets Moral Principles

AI cannot feel morality, but it can store moral frameworks, map ethical reasoning, and detect patterns. Moral memory implants require AI to translate subjective values into structured data.

How AI captures ethical information

- Logging moral decisions users consider important

- Recording motivations behind key choices

- Summarizing life lessons in structured formats

- Tracking contradictions in ethical behavior

- Identifying patterns across past dilemmas

- Creating personalized ethical models

This creates a digital moral blueprint, unique to each individual.
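The capture steps above can be sketched as a simple decision log. This is a minimal illustration under stated assumptions, not a real implant API: the class names `MoralDecision` and `DecisionLog` are hypothetical, and the sketch assumes the user tags each decision with the principles it honored or compromised. The `contradictions` method illustrates one item from the list, tracking contradictions, by flagging principles that were upheld in some entries and violated in others.

```python
from dataclasses import dataclass, field
from datetime import date


# Hypothetical record format for illustration; not a real implant API.
@dataclass
class MoralDecision:
    when: date
    description: str
    motivation: str                                  # why the user made the choice
    upheld: set[str] = field(default_factory=set)    # principles the user says it honored
    violated: set[str] = field(default_factory=set)  # principles the user says it compromised


@dataclass
class DecisionLog:
    entries: list[MoralDecision] = field(default_factory=list)

    def log(self, decision: MoralDecision) -> None:
        self.entries.append(decision)

    def contradictions(self) -> dict[str, list[str]]:
        """Map each principle that was upheld somewhere but violated elsewhere
        to the descriptions of the decisions that violated it."""
        upheld_anywhere: set[str] = set().union(*(d.upheld for d in self.entries))
        found: dict[str, list[str]] = {}
        for d in self.entries:
            for principle in sorted(d.violated & upheld_anywhere):
                found.setdefault(principle, []).append(d.description)
        return found


log = DecisionLog()
log.log(MoralDecision(date(2024, 3, 1), "Reported a billing error in my favor",
                      "Fairness to the shop", upheld={"honesty"}))
log.log(MoralDecision(date(2025, 6, 9), "Let a misleading claim stand",
                      "Avoiding conflict", violated={"honesty"}))
print(log.contradictions())  # {'honesty': ['Let a misleading claim stand']}
```

The log stores the user's own motivation next to each choice, so a later contradiction can be shown together with the reasons given at the time rather than as a bare verdict.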

Future Selves and Ethical Continuity

Life changes people. A person at age sixty may see the world differently than they did at age twenty. Moral memory implants bridge this temporal gap by helping individuals communicate ethically with their future selves.

Benefits for future identity

- Preserves core values through transitions

- Supports decision making during stress or crisis

- Reduces long-term regret through consistent principles

- Helps navigate ethical challenges with clarity

- Strengthens personal identity across decades

The implant acts as a guide from the past, offering moral grounding.

AI as a Guardian of Personal Ethics

One of the most transformative implications is AI acting as a guardian of personal integrity. Instead of algorithms shaping behavior externally, moral memory implants internalize AI as a supportive conscience.

AI guardian functions

- Alerting users when choices contradict stored values

- Providing moral frameworks aligned with long-term goals

- Offering historical context for similar past decisions

- Mediating emotional reactions with rational memory

- Supporting ethical courage in difficult situations

AI becomes a stabilizing force rather than a controlling one.
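A guardian alert of the kind described above could work as a lookup against stored principles. The sketch below is hypothetical throughout (`Principle`, `Reminder`, `review_choice`, and the action tags are invented for illustration) and assumes proposed actions arrive already labeled with tags. It returns reminders phrased as questions and never blocks the action, in keeping with a stabilizing rather than controlling role.

```python
from dataclasses import dataclass


# Hypothetical types for illustration only.
@dataclass(frozen=True)
class Principle:
    name: str
    statement: str                  # the user's own wording, stored verbatim
    conflicts_with: frozenset[str]  # action tags the user said would breach it


@dataclass(frozen=True)
class Reminder:
    principle: str
    message: str


def review_choice(action_tags: set[str], principles: list[Principle]) -> list[Reminder]:
    """Return a reminder for each stored principle the proposed action may contradict.
    Advisory only: nothing here blocks or changes the action."""
    reminders = []
    for p in principles:
        overlap = action_tags & p.conflicts_with
        if overlap:
            reminders.append(Reminder(
                p.name,
                f'You once wrote: "{p.statement}" This choice involves '
                f'{", ".join(sorted(overlap))}. Do you still want to go ahead?',
            ))
    return reminders


principles = [
    Principle("honesty", "I don't mislead people who trust me.",
              frozenset({"omit_material_fact", "false_claim"})),
    Principle("patience", "I sleep on angry replies.",
              frozenset({"reply_while_angry"})),
]
for r in review_choice({"omit_material_fact", "meet_deadline"}, principles):
    print(r.message)
```

Quoting the user's own stored wording, rather than a system-generated rule, keeps the reminder anchored in values the person chose.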

The Risk of Ethical Drift in a Digital Age

Digital life constantly pushes individuals toward new behaviors. Platforms reward instant gratification, tribalism, and short-term reactions rather than long-term values. Moral memory implants help counteract this drift.

How implants resist ethical erosion

- Reminding users of commitments during digital conflict

- Highlighting past intentions when faced with temptation

- Offering alternative paths that align with personal virtue

- Providing cooling periods during heated decision cycles

Ethics become a steady anchor instead of a fluid reaction.
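A cooling period can be as simple as a timer attached to a decision that the user or system has flagged as heated. The function `cooling_check` and the 24-hour default below are assumptions for illustration only. The check reports how much time remains and leaves the decision about whether to wait with the user.

```python
from datetime import datetime, timedelta

# Hypothetical default; a real system would let the user set this.
DEFAULT_COOLING = timedelta(hours=24)


def cooling_check(flagged_at: datetime, now: datetime,
                  cooling: timedelta = DEFAULT_COOLING) -> tuple[bool, timedelta]:
    """For a decision flagged as heated, return (still_cooling, time_remaining).
    Advisory only: the caller decides whether to show a pause prompt."""
    remaining = max(cooling - (now - flagged_at), timedelta(0))
    return remaining > timedelta(0), remaining


flagged = datetime(2025, 11, 17, 21, 0)
still_cooling, left = cooling_check(flagged, datetime(2025, 11, 17, 23, 30))
if still_cooling:
    print(f"You flagged this as a heated moment. {left} remain in your cooling period.")
```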

How Moral Memory Is Structured

Storing morality is not as simple as archiving data. It requires layers of meaning that maintain relevance across time and context.

Layers of moral memory

- Principle layer that stores fundamental values

- Lesson layer that encodes experience-based insights

- Action layer that records past ethical behavior

- Emotion layer that captures intentions without coercion

- Reflection layer that stores personal interpretations

Together, these layers provide a holistic blueprint of moral identity.
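One way to represent the five layers is a single record with one field per layer. This is a sketch under stated assumptions: `MoralMemory`, its method names, and the field types are hypothetical, and the emotion layer is simplified to the intention a user records alongside an action. Reflections are appended rather than overwritten, so a person's changing interpretation of a past choice is kept as a history.

```python
from dataclasses import dataclass, field
from typing import Optional


# Hypothetical layout: one field per layer named in the article.
@dataclass
class MoralMemory:
    principles: list[str] = field(default_factory=list)              # principle layer
    lessons: list[str] = field(default_factory=list)                 # lesson layer
    actions: list[str] = field(default_factory=list)                 # action layer
    intentions: dict[str, str] = field(default_factory=dict)         # emotion layer (simplified)
    reflections: dict[str, list[str]] = field(default_factory=dict)  # reflection layer

    def record_action(self, action: str, intention: Optional[str] = None) -> None:
        self.actions.append(action)
        if intention is not None:
            self.intentions[action] = intention

    def reflect(self, action: str, note: str) -> None:
        # Stored separately from the action record and appended, so reinterpreting
        # a choice never edits what was done or erases earlier reflections.
        self.reflections.setdefault(action, []).append(note)

    def recall(self, action: str) -> dict:
        """Gather what the layers hold about one past action."""
        return {
            "recorded": action in self.actions,
            "intention": self.intentions.get(action),
            "reflections": list(self.reflections.get(action, [])),
        }


mem = MoralMemory(principles=["Keep promises to family"],
                  lessons=["Missed events cannot be made up later"])
trip = "Skipped a work trip for a school play"
mem.record_action(trip, intention="Keep my word to my daughter")
mem.reflect(trip, "Worth it; the trip was rescheduled.")
print(mem.recall(trip))
```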

When AI and Human Morality Conflict

Conflicts may arise when AI identifies inconsistencies that users ignore. The implant could surface difficult truths.

Possible tensions

- AI identifies selfish motivations users overlook

- Users may resist ethical reminders during stress

- AI might misinterpret ambiguous moral decisions

- Users may feel surveilled by their own future selves

These conflicts highlight the need for user control over the system.

Ethical Risks of Storing Morality

Storing personal ethics also creates vulnerabilities.

Key concerns

- Exploitation of stored moral data

- Misuse by corporations or governments

- Pressure to conform to standardized ethics

- Loss of moral privacy

- Dependency on external guidance

- Manipulation through altered moral archives

Ethical memory must be protected with strict autonomy safeguards.
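One safeguard against the last concern, altered moral archives, is a keyed integrity tag: if anyone edits the archive without the user's key, verification fails. The sketch below uses Python's standard `hmac` and `hashlib` modules; `seal` and `verify` are hypothetical helper names, and it assumes the key stays with the user, for example on the device. This only detects tampering. It does not hide the archive's contents, which would also require encryption.

```python
import hashlib
import hmac
import json
import secrets


def seal(archive: dict, key: bytes) -> str:
    """Hypothetical helper: HMAC-SHA256 tag over a canonical JSON encoding."""
    payload = json.dumps(archive, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(archive: dict, key: bytes, tag: str) -> bool:
    """True only if the archive matches what was sealed with this key.
    compare_digest avoids leaking timing information during the comparison."""
    return hmac.compare_digest(seal(archive, key), tag)


key = secrets.token_bytes(32)  # assumed to stay on the user's device
archive = {"principles": ["Tell the truth even when it costs me"]}
tag = seal(archive, key)

archive["principles"][0] = "Tell the truth when convenient"  # a silent edit
print(verify(archive, key, tag))  # False
```

Sorting keys before hashing means the same archive always produces the same tag regardless of the order in which fields were inserted.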

Privacy Requirements for Moral Implants

Moral memory implants require robust privacy protections.

Essential protections

- Local, encrypted storage

- Complete user ownership of moral archives

- No access by outside platforms

- Zero-knowledge architecture

- Hardware-level protections for implants

- Clear opt-in and opt-out mechanisms

Ethics must never become a commercial asset.

Mixed Reality and Context-Driven Moral Guidance

As people move between physical, digital, and mixed reality environments, moral memory implants can adapt ethical reminders to context.

Examples

- Ethical nudges during immersive interactions

- Contextual reflection cues in social environments

- Warnings during high-stakes digital negotiations

- Support during conflicts in virtual communities

This helps a person's values carry over consistently across physical, digital, and mixed reality settings.

When AI Outsmarts Its User Ethically

There may come a point when AI recognizes moral complexity faster than humans. This raises the question: can AI ethically guide humans without controlling them?

AI may:

- Anticipate moral dilemmas earlier

- Recognize manipulative situations sooner

- Detect ethical blind spots

- Offer insights from pattern analysis

Guidance must remain suggestion-based rather than directive.

How Wyrloop Evaluates Moral Memory Systems

Wyrloop analyzes emerging cognitive augmentation tools for fairness, autonomy, and transparency. Our evaluation criteria include:

- User control over stored ethics

- Protection against unwanted influence

- Transparency in value interpretation

- Clarity of moral reminder logic

- Safeguards against behavioral manipulation

- Allowance for moral evolution

Systems that empower users without overriding autonomy receive higher ratings in our Moral Continuity Index.
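The article does not say how the Moral Continuity Index is calculated. Purely to illustrate how criteria like these could be combined, the sketch below assumes each of the six criteria is rated from 0 to 5, weighted equally, and scaled to a 0 to 100 score. The criterion keys, the rating scale, and `continuity_index` itself are invented here and should not be read as Wyrloop's actual method.

```python
# Hypothetical keys mirroring the six criteria listed above.
CRITERIA = (
    "user_control",
    "influence_protection",
    "interpretation_transparency",
    "reminder_logic_clarity",
    "manipulation_safeguards",
    "moral_evolution",
)


def continuity_index(ratings: dict[str, int], max_rating: int = 5) -> float:
    """Hypothetical equal-weight aggregate of per-criterion ratings, scaled to 0-100."""
    missing = set(CRITERIA) - set(ratings)
    if missing:
        raise ValueError(f"unrated criteria: {sorted(missing)}")
    for name in CRITERIA:
        if not 0 <= ratings[name] <= max_rating:
            raise ValueError(f"{name} rating out of range")
    total = sum(ratings[name] for name in CRITERIA)
    return round(100 * total / (max_rating * len(CRITERIA)), 1)


example = {
    "user_control": 5, "influence_protection": 4, "interpretation_transparency": 3,
    "reminder_logic_clarity": 5, "manipulation_safeguards": 4, "moral_evolution": 3,
}
print(continuity_index(example))  # 80.0
```

Equal weighting is the simplest neutral choice; a real index might reasonably weight user control or manipulation safeguards more heavily.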

The Future of Moral Continuity

Moral memory implants have the potential to preserve integrity across decades. They support ethical resilience in environments filled with distraction and pressure. Yet they must remain tools rather than authorities.

Principles for future development

- Respect evolving identity

- Never force moral behavior

- Provide clarity, not commands

- Support self-reflection

- Preserve agency above all

Moral continuity must support humanity, not replace it.

Conclusion

Moral memory implants offer a radical new vision of personal ethics. They merge AI, identity, and long-term decision making into a single system that protects values across time. These implants could strengthen integrity, reduce regret, and support moral courage in difficult moments.

Yet they must be designed with absolute respect for autonomy, privacy, and human variability. Ethics cannot be imposed. They can only be supported.

The future of moral memory will depend on building technologies that preserve human complexity while offering clarity for the self you will become tomorrow.